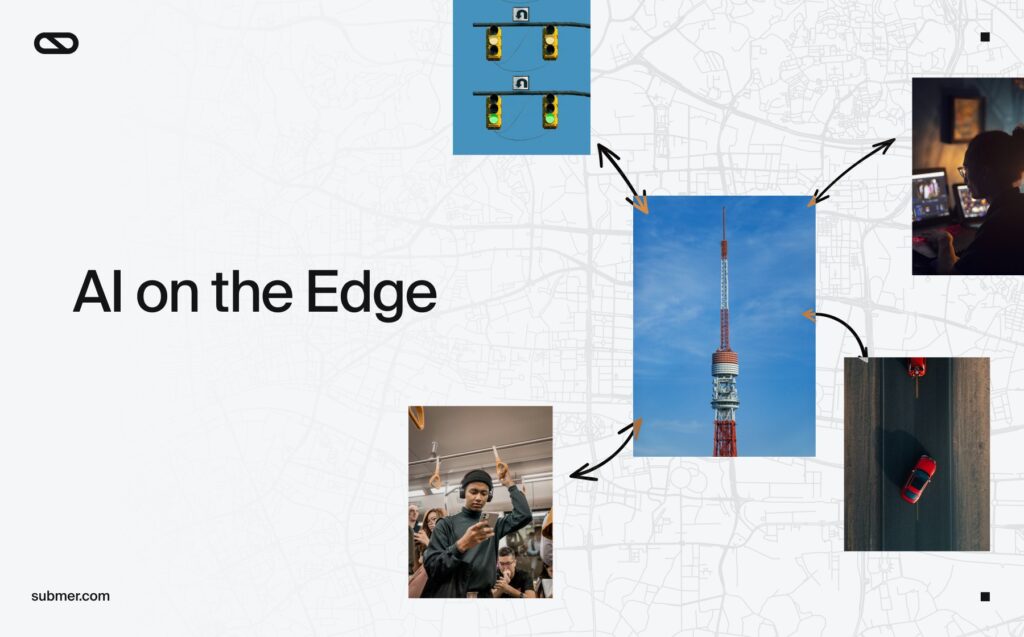

A few weeks ago, we highlighted several key datacenter trends for 2026. With Mobile World Congress 2026 just finished, where edge compute took center stage, it’s worth taking a closer look at one of those trends: the era of edge AI infrastructure.

Processing data closer to the source dramatically reduces latency, creating faster and more seamless digital experiences. While that advantage is valuable in almost any digital environment, for some applications, low latency is not simply beneficial; it is essential.

Why edge AI infrastructure matters

Cloud gaming is a clear example. Instead of running a game locally, players stream the experience from a remote GPU server. Any noticeable delay between player input and game response can disrupt gameplay, making latency one of the most critical performance factors.

Running those GPU workloads on servers located as close as possible to the user dramatically improves performance. With the recent acquisition of Radian Arc, Submer is already seeing the impact of edge-based GPU infrastructure embedded within telecom networks, enabling cloud gaming services for telcos and their customers.
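To put rough numbers on the effect of distance, the sketch below estimates round-trip propagation delay over optical fiber. It assumes light travels through fiber at about 200,000 km/s (roughly two thirds of its speed in a vacuum) and that the fiber runs in a straight line. The function name and the three example distances are illustrative, not measurements from any real network, and real-world latency adds switching, encoding, and rendering time on top.

```python
# Rough estimate of round-trip propagation delay over optical fiber.
# Assumption: signal speed in fiber is about 200,000 km/s and the route is
# a straight line. Real routes are longer and add processing delay.

FIBER_SPEED_KM_PER_S = 200_000  # approximate, illustrative constant


def round_trip_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds."""
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000


if __name__ == "__main__":
    # Hypothetical distances chosen only to show the scale of the effect.
    examples = [
        ("edge node in the same metro area", 50),
        ("regional datacenter", 500),
        ("distant cloud region", 3000),
    ]
    for label, km in examples:
        print(f"{label}: {round_trip_ms(km):.1f} ms")
```

Under these assumptions, every 100 km between player and server adds about 1 ms of round-trip delay before any processing happens, which is why moving the GPU into the local telecom network matters for interactive workloads.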

Edge AI across industries

Distributed edge AI infrastructure allows organizations to process and analyze data closer to where it is generated, enabling faster insights and responses. Edge AI is becoming increasingly important across industries, with use cases that include:

• Automotive systems using AI for real-time navigation and driver monitoring

• Industrial IoT enabling predictive maintenance and production quality control

• Healthcare wearables providing immediate analysis of patient data

• Smart cities using connected sensors to manage traffic and security

• Retail and agriculture applications driven by real-time data from sensors and devices

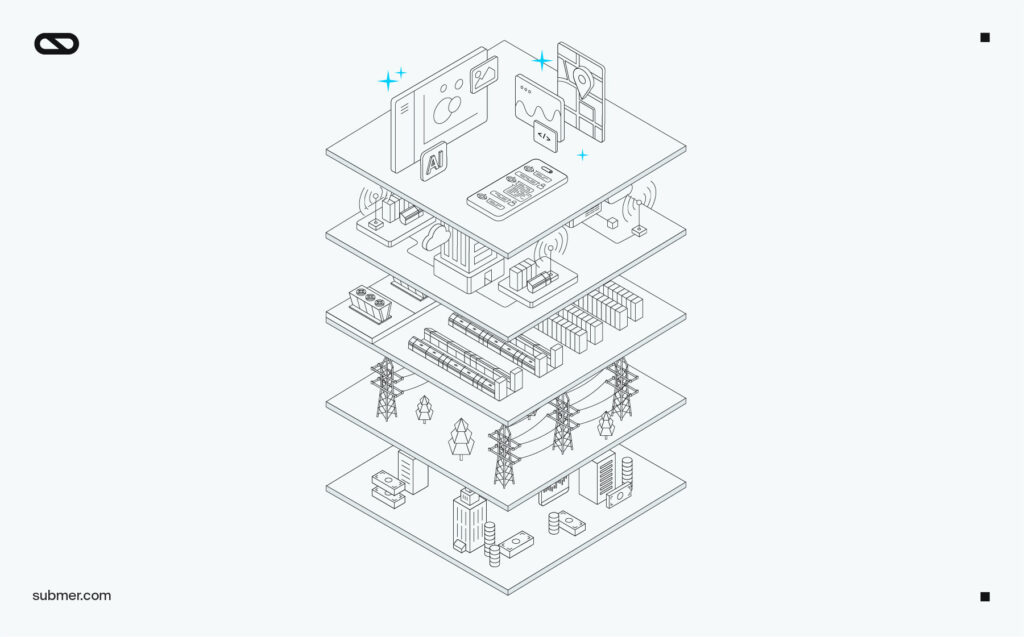

Core-to-edge AI architecture

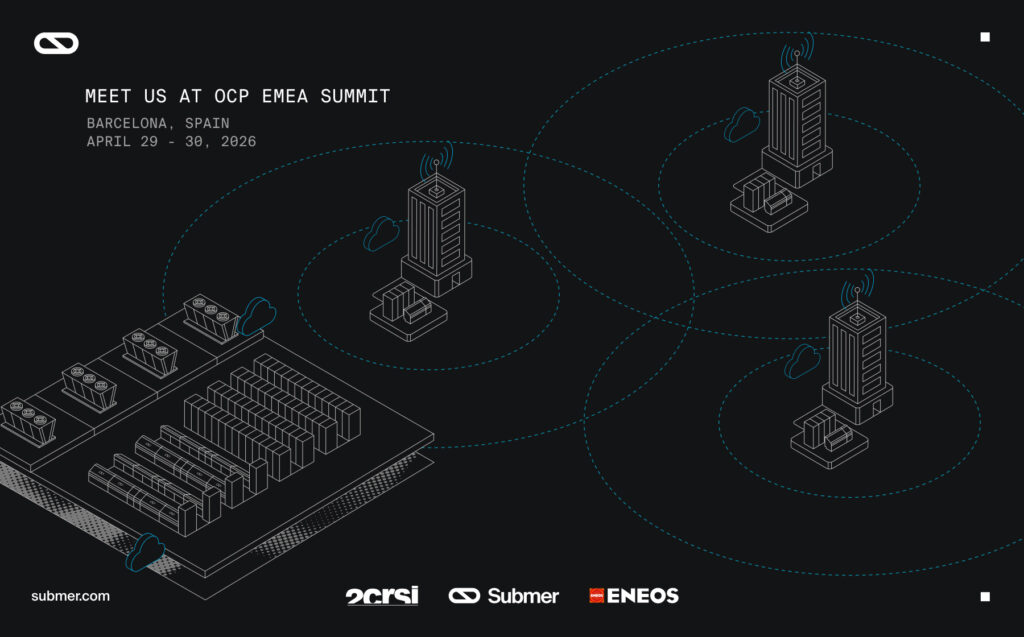

When it comes to AI infrastructure, a core-to-edge architecture is emerging as the most effective model, with large cloud datacenters supported by networks of edge compute nodes.

Large-scale AI datacenters provide centralized compute power, while distributed edge nodes deliver real-time inference and localized processing. These edge nodes give telecom companies a major opportunity to expand their offering, moving beyond connecting users to cloud compute and instead providing the compute itself.
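The division of labor between core and edge can be illustrated with a simple placement rule. This is a minimal sketch under stated assumptions, not a description of any Submer or inferX scheduler: the `Workload` and `Site` types, the `place` function, and the policy of preferring core capacity whenever it meets the latency budget are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Workload:
    name: str
    max_latency_ms: float  # latency budget the application can tolerate


@dataclass
class Site:
    name: str
    tier: str          # "core" or "edge"
    latency_ms: float  # estimated latency from the user to this site


def place(workload: Workload, sites: List[Site]) -> Optional[Site]:
    """Pick a site that meets the latency budget, preferring core capacity.

    Assumed policy: use the core when it is fast enough, so that edge GPUs
    stay free for workloads that genuinely need low latency.
    """
    eligible = [s for s in sites if s.latency_ms <= workload.max_latency_ms]
    if not eligible:
        return None  # no site can satisfy the latency budget
    # Sort core sites first, then by lowest latency within each tier.
    eligible.sort(key=lambda s: (s.tier != "core", s.latency_ms))
    return eligible[0]


if __name__ == "__main__":
    # Hypothetical sites and latency figures, for illustration only.
    sites = [Site("core-dc", "core", 40.0), Site("edge-pop", "edge", 5.0)]
    for wl in [Workload("cloud-gaming", 20.0), Workload("batch-training", 500.0)]:
        chosen = place(wl, sites)
        print(wl.name, "->", chosen.name if chosen else "no eligible site")
```

With these example values, the latency-sensitive workload lands on the edge node because the core site exceeds its budget, while the tolerant workload stays in the core datacenter.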

A new opportunity for telecom operators

Telcos can implement edge compute nodes throughout their networks, delivering localized GPU-as-a-Service solutions that provide low-latency data processing to local users. That GPU capacity can be utilized and monetized as required, whether for cloud gaming subscriptions or AI inferencing workloads. But that localized GPU compute also opens the door to a very important opportunity: AI sovereignty.

Countries and territories all over the globe are beginning to worry about their reliance on foreign entities to provide the AI and cloud infrastructure that their citizens need. Ensuring that the full AI stack that a country or territory relies on is wholly owned and operated within that territory is key to AI resilience.

But that’s only half of the issue. Data regulations like the EU’s GDPR place tight controls on access to personal data and restrict its transfer outside the European Economic Area unless adequate safeguards are in place. However, legislation such as the US CLOUD Act empowers US law enforcement to compel US cloud companies to provide data, including personal data, regardless of where that data is stored and processed. The result could be a legal conflict between territorial data regulation and foreign legislation.

EU countries that build out full-stack AI solutions, however, can ensure AI resilience, while also avoiding any conflicts of data regulation that could arise from engaging with foreign cloud providers.

Telecom companies can deploy edge compute nodes at scale, providing a relatively simple solution to the sovereign AI challenge, while also laying the foundation for valuable new revenue models.

Submer & inferX enabling the core-to-edge AI infrastructure

Submer and its AI cloud and edge company, inferX, are helping enable the transition toward distributed AI environments.

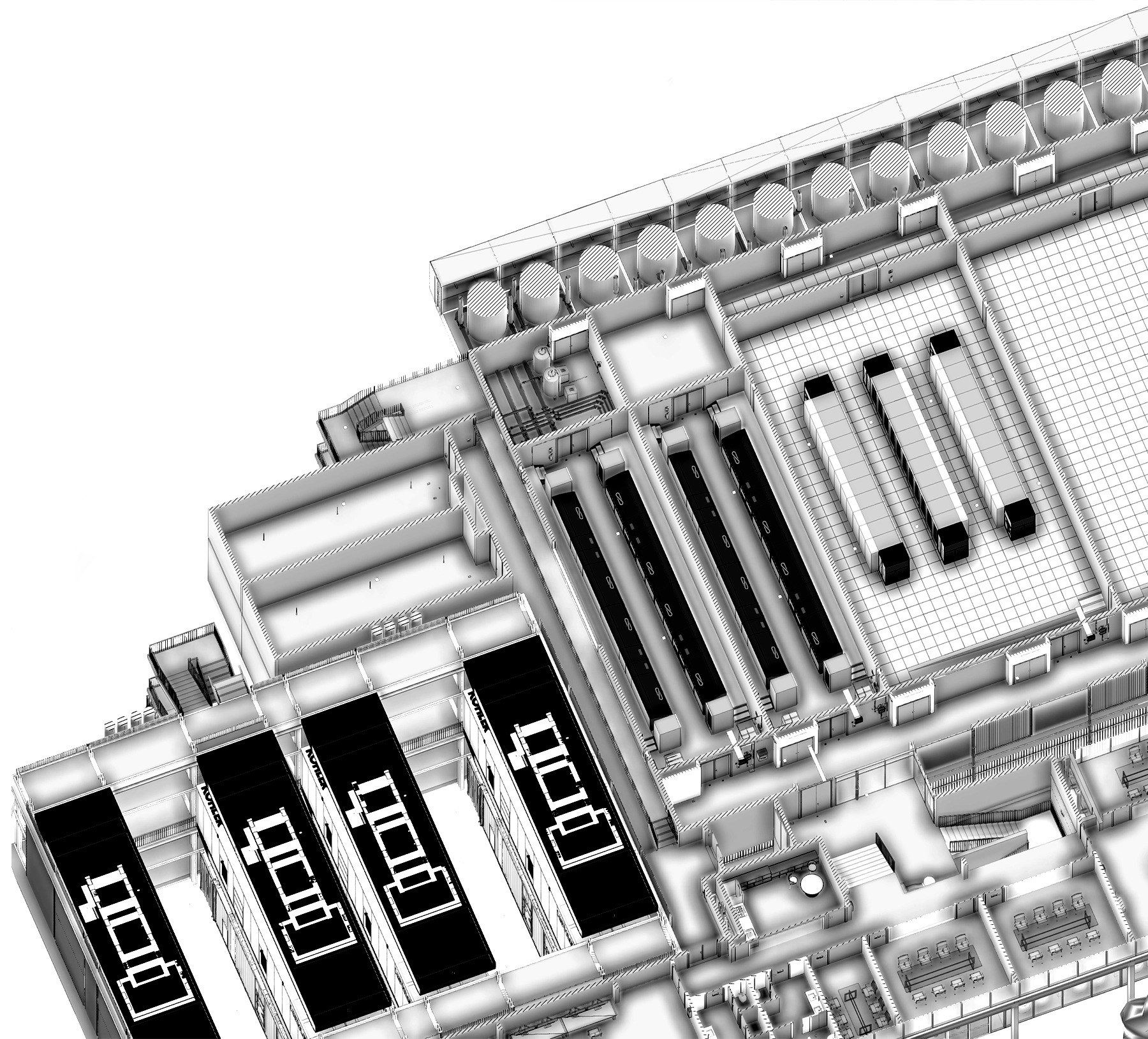

Submer delivers full-stack AI functionality to telcos, providing new customer solutions and revenue streams, as well as creating a robust sovereign AI roadmap that gives nations the resilience and regulatory defense that they require. We’ve built an ecosystem that’s designed to deliver the core-to-edge infrastructure to power full-stack sovereign AI compute solutions, from large-scale high-density datacenters at the core, to low-latency AI factories at the edge.

Our design and build capabilities enable us to deploy large-scale AI datacenters at speed, while our NVIDIA Cloud Partner, inferX, is positioned to leverage that infrastructure to deliver AI-as-a-Service solutions at scale. And our recent acquisition of Radian Arc provides an established footprint across the telco landscape that’s already driving strong revenue through cloud gaming.

The age of AI is accelerating, and the need to own and control AI infrastructure has never been greater. At Submer, we understand the need for sovereign AI solutions at both national and enterprise levels, and we can deliver the core-to-edge infrastructure to power them.