While it may feel like the current age of AI came out of nowhere, it has been far more of an evolutionary journey, building on the foundations of centralised high-performance computing, the ubiquity of the cloud and the ever-increasing compute density resulting from more efficient fabrication processes. What is different now is the speed and scale – AI infrastructure is no longer evolving incrementally but is being deployed at industrial scale.

As AI platforms have become more complex, dedicated infrastructure, built from the ground up to execute and accelerate AI workloads, has become vital to the development and implementation of more advanced AI solutions, whether that means high-density, high-performance compute at scale for training LLMs or low-latency, localised processing for near-instantaneous inference.

But even that dedicated AI infrastructure is often built and operated in a fragmented manner, with multiple stakeholders owning parts of the overall platform on which those AI workloads are run. Building a full-stack AI infrastructure solution gives enterprises and even governments complete ownership and control over their AI capabilities.

The need for faster AI infrastructure deployment

Speed of deployment is a major concern when it comes to AI infrastructure, as the appetite and need for AI applications and solutions continue to build momentum. The traditional 24 – 36 month build cycle for datacenters simply doesn’t work through an AI lens, especially when the hardware needs of AI extend well beyond core cloud datacenters.

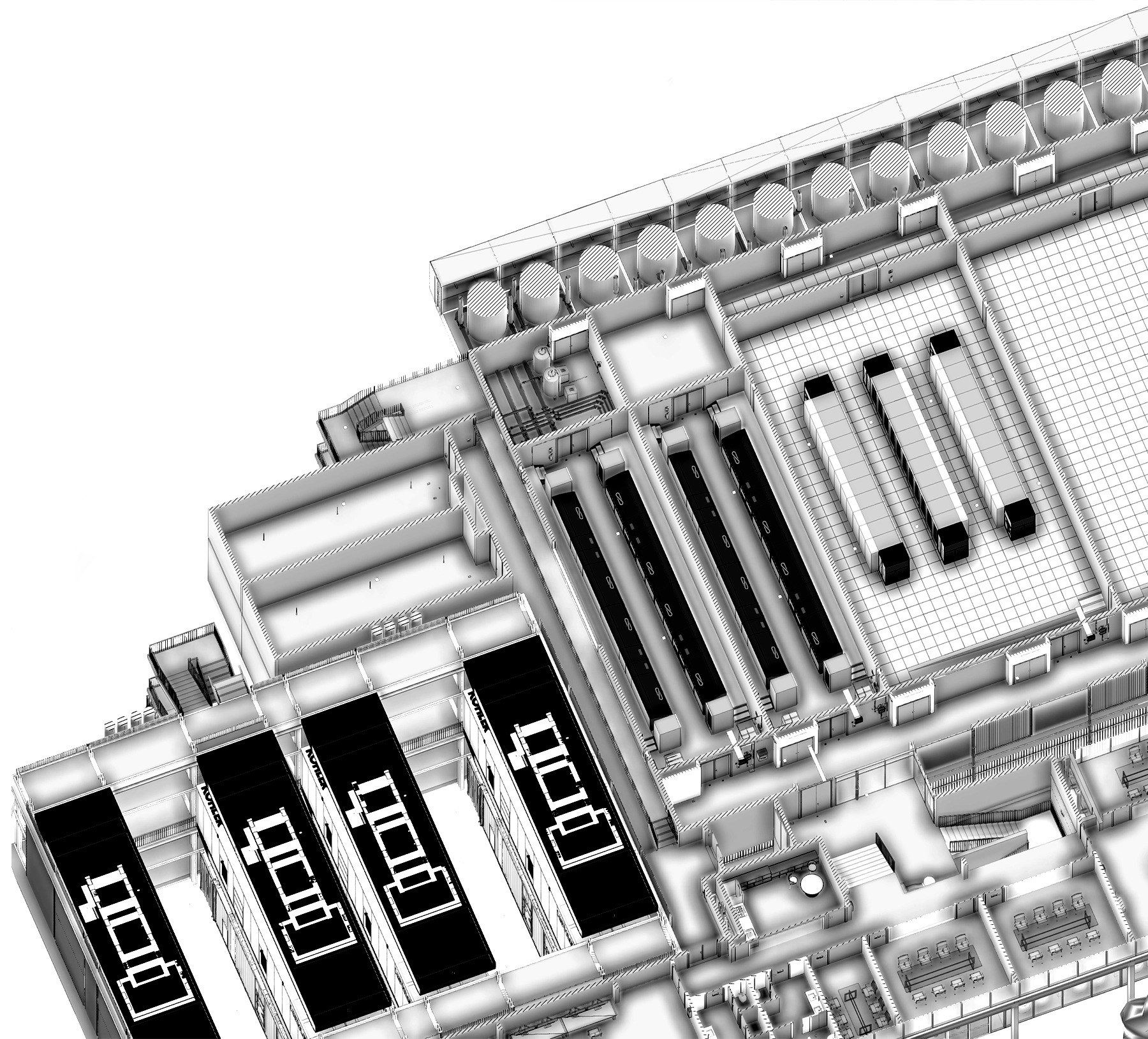

Modular datacenter builds based on reference designs allow for faster and more scalable deployments from the core to the edge. This modular design and build structure is key to delivering full-stack AI infrastructure, while also compressing timelines.

Full-stack infrastructure is crucial for sovereign AI

Another, arguably even more important, reason to invest in full-stack AI infrastructure is to ensure AI and data sovereignty. AI is no longer a curiosity; it’s an imperative at both enterprise and national levels – organisations and governments must be able to own and control their own AI solutions, without any reliance on outside or foreign entities.

By building and controlling full-stack AI infrastructure, enterprises and governments can ensure AI resilience, without any external factors influencing operation or functionality. As reliance on AI continues to grow, that resilience becomes ever more crucial – it’s fair to say that AI resilience will soon be considered, if it isn’t already, an issue of national security for many countries and territories.

Data sovereignty, along with data privacy laws and regulations, is also a key consideration when developing AI strategies. Many countries and territories have strict rules regarding data privacy, especially when it comes to personal data. The EU’s GDPR is one of the most rigorous privacy regulations in the world, putting significant protections in place for the personal data of individuals in the EU. But working with cloud and AI providers located outside of the EU can potentially cause data privacy issues.

The US CLOUD Act, for example, empowers law enforcement agencies within the US to compel any US-based company to surrender data regardless of where that data resides, or the nationality of the individuals it pertains to. This is just one example of how conflicting international legislation and regulation could cause data privacy problems, but it clearly highlights the importance of data sovereignty. Building full-stack AI infrastructure within the borders of a country or territory, with no reliance on, or influence from, foreign entities, avoids such potential issues.

The full-stack AI approach

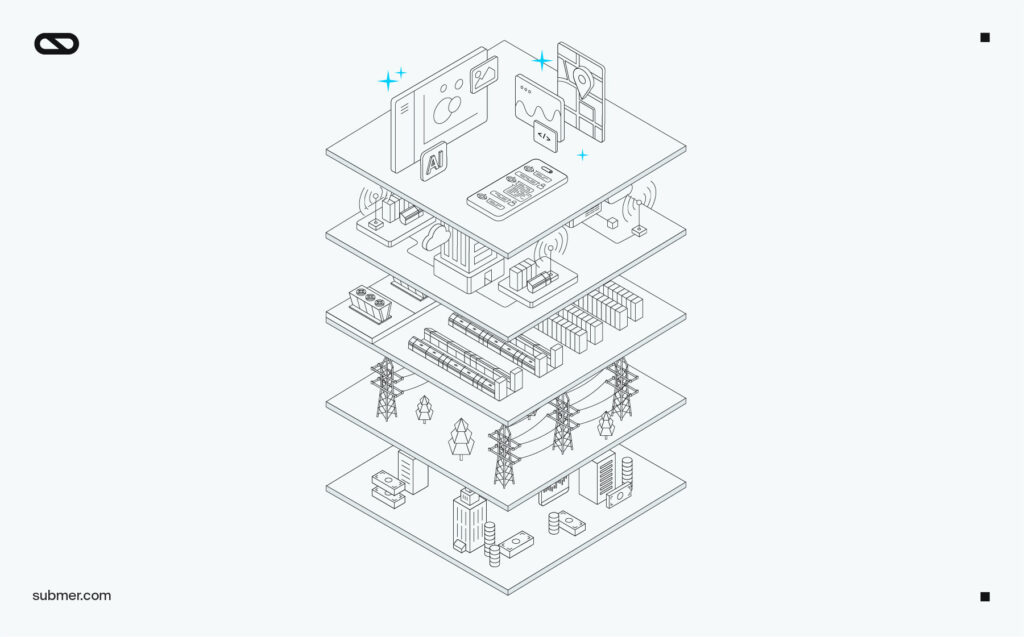

So, what constitutes full-stack AI infrastructure? In essence, we’re talking about building out every part of the AI equation, from initial consultancy and planning, through to the ultimate deployment and operation of a core-to-edge AI infrastructure platform. But between those two endpoints are many additional stages, such as acquiring land and power, designing and building the core datacenter and fitting it out with mechanical, electrical and plumbing (MEP) units, liquid cooling solutions and the required server hardware, before bringing it all into an operational state.

And that’s just the core datacenter. You then need to factor in the edge compute nodes, creating myriad spokes from that core hub, and providing targeted, low-latency processing of localised data, to deliver AI inference at scale and at speed. Meanwhile, high-speed connectivity is essential in all directions, making network efficiency and resilience equally vital.

When you break down the process and examine all the stages and the expertise that’s necessary for each of those steps, that 24 – 36 month timeline becomes more understandable, or worse still, inevitable. But while the overall challenge looks daunting, it doesn’t have to be, and that timeline isn’t as inevitable as it might seem.

The key to successfully building out full-stack AI infrastructure is working with a partner that can orchestrate the entire project from end to end and ensure that all the relevant expertise is on tap when needed. A partner with extensive experience designing and manufacturing core components within the ecosystem, and the ability to oversee and manage the entire supply chain, to ensure that every step of the journey is delivered on time and to spec. A partner that can deliver a tried and tested modular datacenter build strategy, expediting deployment and streamlining scalability. And a partner that has strong telco partnerships to ensure that vital high-speed connectivity is in place.

Submer’s full-stack solution

Submer delivers on that vision – orchestrating the design, build, deployment and operation of your full-stack AI infrastructure solution. With over a decade at the forefront of liquid cooling, modular datacenter design and build capabilities, in-group edge compute expertise, and key ecosystem partnerships, Submer designs and delivers full-stack AI infrastructure to your specification.

And remember that worrying 24 – 36 month deployment timeline for core datacenters? How does 9 – 12 months sound, instead?

The AI race isn’t slowing down, and the need to own and control every part of AI infrastructure is becoming ever more important for enterprises and governments, as is the need to work with a partner that can orchestrate that full-stack solution from end to end.