What does it take to build efficient, future-ready AI infrastructure? As the industry accelerates into the inference era, this question is becoming increasingly urgent. While perspectives vary, one point is clear: the need for resource-optimized AI infrastructure has never been greater.

The scale of the challenge is evident. Recent reports show that AI-driven datacenter electricity consumption has grown by around 12% per year since 2017 – more than four times the growth rate of overall global electricity demand.

What intelligence per watt really means

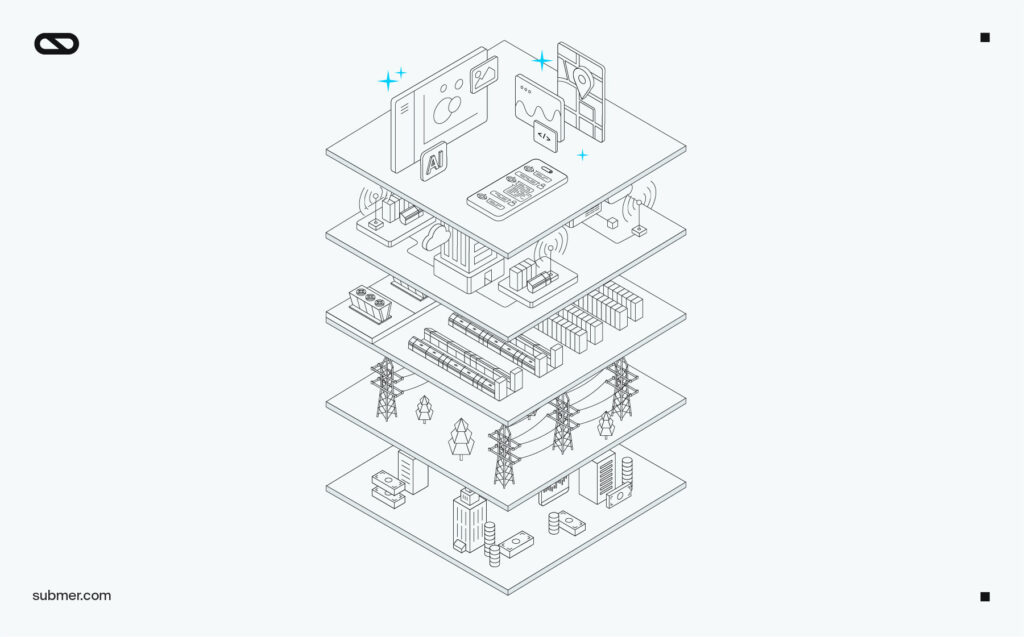

The environmental case is unambiguous, but efficiency in AI infrastructure is not sustainability storytelling. It is efficiency at system scale, aimed at maximizing intelligence per watt across the lifecycle. As AI systems move into an agentic phase of inference – where models continuously interpret, decide and act in real time – infrastructure requirements are shifting fundamentally. This transition is increasing compute demand and placing sustained pressure on energy, latency and system efficiency across the entire stack. NVIDIA illustrates the new AI infrastructure as having “5 layers” with energy at level 1, because the system can only produce as much intelligence as its power supply allows.

Energy’s foundational role in the stack means a renewed focus on intelligence per watt (IPW). Higher IPW allows AI models to achieve the same – or better – performance with less electricity. This reframes AI from an unsustainable energy burden into a system that can drive efficiency gains at scale, provided infrastructure is designed correctly. Full-stack AI systems with higher IPW are better placed to make the bridge to inference applications, for example by optimizing resource management in areas such as smart grids, maximizing renewable energy generation or reducing waste in industrial processes. This shift toward efficiency is also influencing where and how AI workloads are deployed. In the inference era, infrastructure efficiency goes beyond operations, shaping capital efficiency, deployment speed and long-term scalability.
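To make the idea concrete, here is a minimal sketch of how IPW could be compared between two deployments. The article does not give a formal definition, so the metric below (quality-weighted throughput divided by average power draw) is an assumption chosen for illustration, and the `Deployment` class, its field names and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """Hypothetical description of an inference deployment (illustrative only)."""
    name: str
    tokens_per_second: float  # sustained inference throughput
    quality: float            # task quality score in [0, 1], e.g. benchmark accuracy
    power_watts: float        # average power draw under that load


def intelligence_per_watt(d: Deployment) -> float:
    """Assumed IPW proxy: quality-weighted throughput per watt of power draw."""
    if d.power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return d.tokens_per_second * d.quality / d.power_watts


# Made-up numbers: same throughput and quality, 20% less power.
baseline = Deployment("baseline", 1000.0, 0.90, 500.0)
optimized = Deployment("optimized", 1000.0, 0.90, 400.0)

gain = intelligence_per_watt(optimized) / intelligence_per_watt(baseline)
print(f"IPW gain: {gain:.2f}x")
```

Under this assumed metric, delivering the same output with 20% less power raises IPW by 25%, which is the "same performance with less electricity" case described above.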

The role of edge in AI efficiency

Research shows that deploying smaller, more specialized AI models locally at the edge can reduce energy consumption by up to 60-80% compared to large, general-purpose models that run in central cloud datacenters. This decentralization, which enables AI applications to be smaller and faster and to have a higher IPW, is a compelling argument for developing datacenters that prioritize efficient AI model architectures and hardware over simply building larger, more resource-intensive systems.
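As a rough illustration of what that range implies, the sketch below applies the 60-80% figure to a cloud energy budget. Because the quoted reduction is an "up to" figure, the results are best read as best-case bounds rather than expected values. The function name and the 100 kWh input are hypothetical.

```python
def edge_energy_bounds(cloud_kwh: float,
                       low_saving: float = 0.60,
                       high_saving: float = 0.80) -> tuple[float, float]:
    """Return (lowest, highest) edge energy use implied by a savings range.

    The default savings are the 60-80% figures quoted in the text; they are
    upper-end claims, so treat the result as an optimistic estimate.
    """
    if cloud_kwh < 0:
        raise ValueError("cloud_kwh must be non-negative")
    if not 0 <= low_saving <= high_saving <= 1:
        raise ValueError("savings must satisfy 0 <= low <= high <= 1")
    lowest = cloud_kwh * (1 - high_saving)   # energy left after the larger saving
    highest = cloud_kwh * (1 - low_saving)   # energy left after the smaller saving
    return lowest, highest


# Hypothetical workload that uses 100 kWh in a central cloud datacenter.
lowest, highest = edge_energy_bounds(100.0)
print(f"Edge estimate: {lowest:.0f} to {highest:.0f} kWh")
```

For a workload that draws 100 kWh centrally, the quoted range corresponds to roughly 20 to 40 kWh at the edge.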

But efficiency in AI infrastructure is not simply a question of centralization versus edge, nor is it limited to energy. It extends to how materials, capacity and lifecycle decisions are managed over time. A sustainable datacenter is one that delivers operational efficiency gains along with the required performance, thereby raising IPW and lowering Total Cost of Ownership (TCO).

How Submer enables high IPW infrastructure

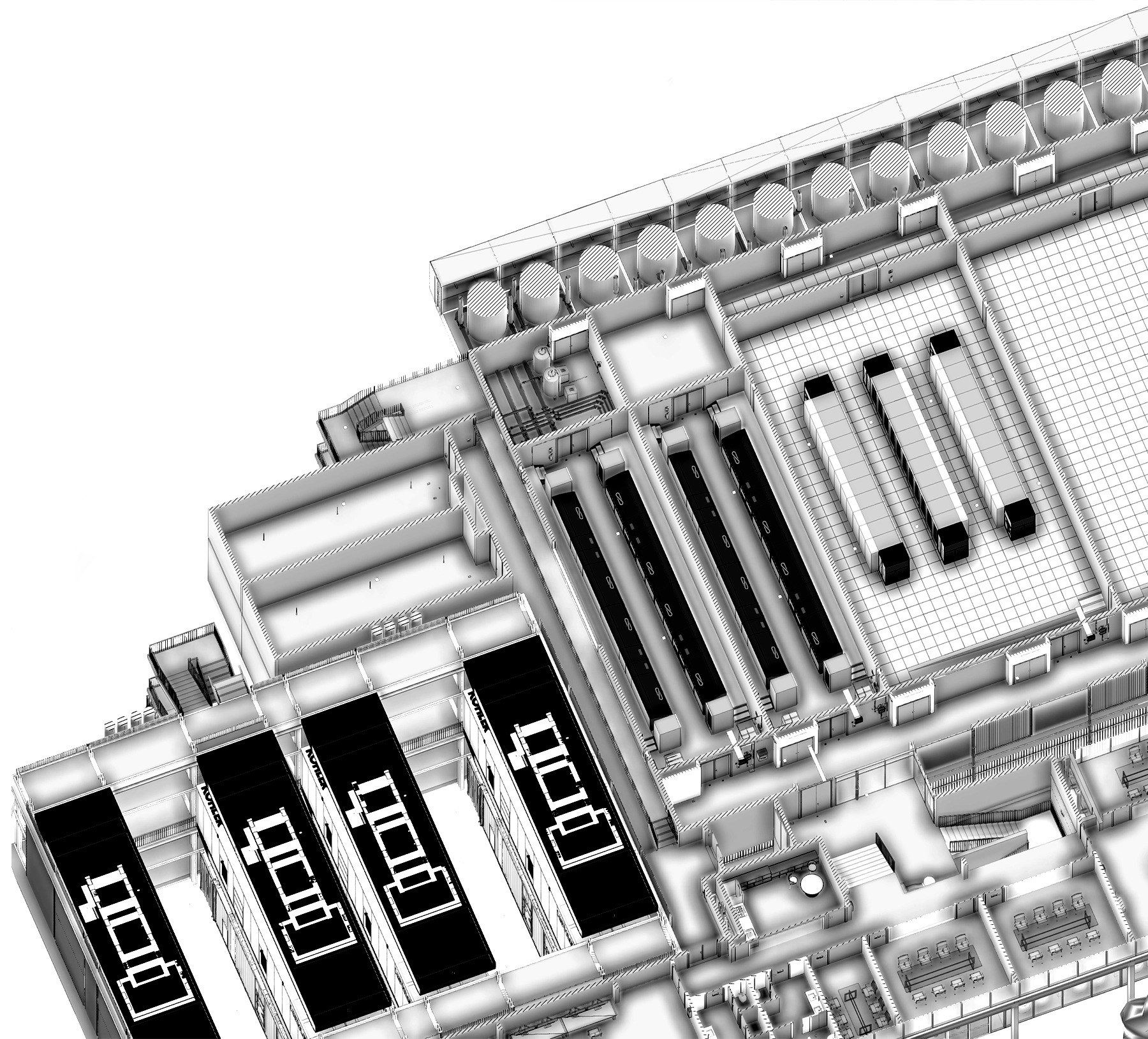

Submer has evolved into an end-to-end AI datacenter infrastructure company focused on maximizing performance per watt across the full lifecycle. Through its inferX division and broader infrastructure portfolio, Submer Group delivers a complete, sovereign AI infrastructure stack – from facility design and liquid cooling through to GPU cloud services at the telco edge. One of the Group’s core values is a commitment to the measurable reduction of impact, backed by transparent methodology and continuous improvement. The environmental footprint of AI compute is reduced through liquid-cooled datacenter architectures, which deliver demonstrably lower energy use, water consumption and emissions than traditional air cooling.

Additionally, a flexible, modular architecture reduces upgrade cycles, operational risk and stranded assets, and the lifecycle approach preserves asset value and extends equipment life, all of which help to reduce environmental impact. This focus on efficiency and lifecycle optimization delivers measurable impact:

- Energy savings: 913.68 GWh

- Water savings: 3,653.95 million litres

- GHG emissions saved: 323.11k tCO2e

As AI becomes as essential as electricity or the internet, its infrastructure must meet the same standard: delivering more with less. Performance per watt is the defining constraint of modern computing infrastructure. At Submer, this is the foundation for building AI infrastructure that is not only more efficient, but economically and environmentally sustainable by design.