In this post, we’re going to cover some of the most common datacenter cooling solutions, as well as some new trends. Over the years, datacenters have seen substantial changes in how server room cooling is managed. 15 to 20 years ago, nobody really cared how much power it took to cool a server room. It was just something that had to be done, and the cooler the better. Then, all of a sudden, somebody started talking about PUE (Power Usage Effectiveness, the ratio of total facility power to the power that actually reaches the IT equipment), and the industry discovered that most Data Centers had a PUE of 2.5 or even 3. Crazy times…!
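
To make those numbers concrete, here is a minimal Python sketch of the PUE ratio. The function names and the 250 kW / 100 kW facility figures are hypothetical, chosen only to reproduce a PUE of 2.5.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_per_it_kw(pue_value: float) -> float:
    """kW spent on non-IT loads (cooling, power conversion, lighting) per kW of IT load."""
    return pue_value - 1.0


# Hypothetical facility: 250 kW drawn in total, 100 kW of it reaching the servers.
ratio = pue(250.0, 100.0)
print(f"PUE = {ratio:.2f}, overhead = {overhead_per_it_kw(ratio):.2f} kW per IT kW")
# prints "PUE = 2.50, overhead = 1.50 kW per IT kW"
```

At a PUE of 3, the same arithmetic gives 2 kW of overhead for every kW of IT load; an ideal facility with zero overhead would sit at exactly 1.0.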

For the last 10 years, we’ve seen a major focus on reducing datacenter PUE. It started simply, with some minor “hacks” in the server room (like rack filler panels or simple precision cooling techniques), and now we’ve finally reached the point where being a “green datacenter” and using free air cooling are a must.

For now, let’s take an overview of the most common datacenter cooling methods, with a brief analysis of their pros and cons:

Air-based Cooling

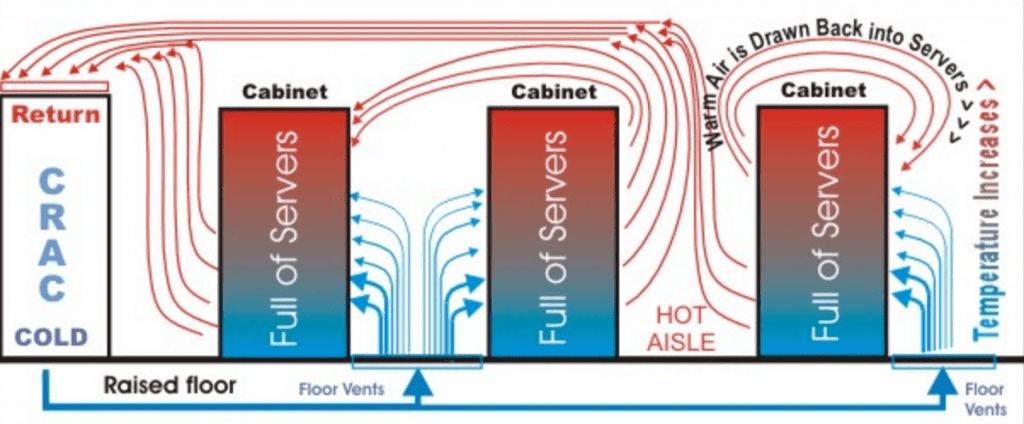

1. Cold Aisle / Hot Aisle

We like to call it “the convection mayhem”. This is by far the most common cooling method in the world’s Data Centers, and also one of the most inefficient. It’s simple to implement and follows the principle of “the more power you burn, the cooler the server room will be”.

By arranging the racks so that their inlet sides face each other (cold aisles) and their outlet sides face each other (hot aisles), you’re supposedly keeping the cold air from mixing with the hot. As you can imagine, this is far from reality: what you actually find in these server rooms and Data Centers are hot and cold air currents fighting each other in a fearsome, never-ending battle. Worst of all, cold air never wins, and you end up pumping crazy amounts of it to try to get rid of the “hot spots” that form around the more heavily loaded racks. In fact, one of the biggest limitations of this method is precisely those heavily loaded racks: you simply can’t have them.
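
Why heavy racks are such a problem becomes clearer with the basic sensible-heat relation P = ρ · cp · V̇ · ΔT, solved for the airflow V̇. This is a minimal sketch assuming all of the rack's heat leaves in the air, sea-level air properties, and a fixed 10 K temperature rise across the servers; the rack loads are hypothetical.

```python
AIR_DENSITY_KG_M3 = 1.2      # assumed: air near sea level at roughly 20 °C
AIR_CP_J_PER_KG_K = 1005.0   # assumed: specific heat of dry air


def required_airflow_m3_s(heat_load_w: float, delta_t_k: float) -> float:
    """Airflow (m^3/s) needed to carry away heat_load_w with an inlet-to-outlet
    temperature rise of delta_t_k, from P = rho * cp * V_dot * dT."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


for rack_kw in (5, 10, 20):  # hypothetical rack loads
    flow = required_airflow_m3_s(rack_kw * 1000, delta_t_k=10.0)
    print(f"{rack_kw:>2} kW rack: {flow:.2f} m^3/s ({flow * 3600:.0f} m^3/h)")
```

Airflow scales linearly with heat load, so a rack drawing twice the power needs twice as much cold air delivered to its inlet, which is hard to guarantee when hot and cold air are free to mix.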

The good news is that there’s a clear trend to move away from this inefficient and energy-consuming monster.

2. Cold or Hot Aisle Air Containment

We’ve been seeing this trend for at least 5 or 6 years now, and it’s one of the easiest upgrades to retrofit onto the previous method. It works by physically enclosing either the cold or the hot aisle so that the two air streams can’t mix, and guiding the air directly from and to the CRAC unit.

The truth is, it actually works pretty well and substantially reduces “hot spots” and air mixing. Of course, the downsides are that you still need to control your plenum pressures (and everything that goes with it) and that you’re still cooling or heating large areas that don’t really need it.

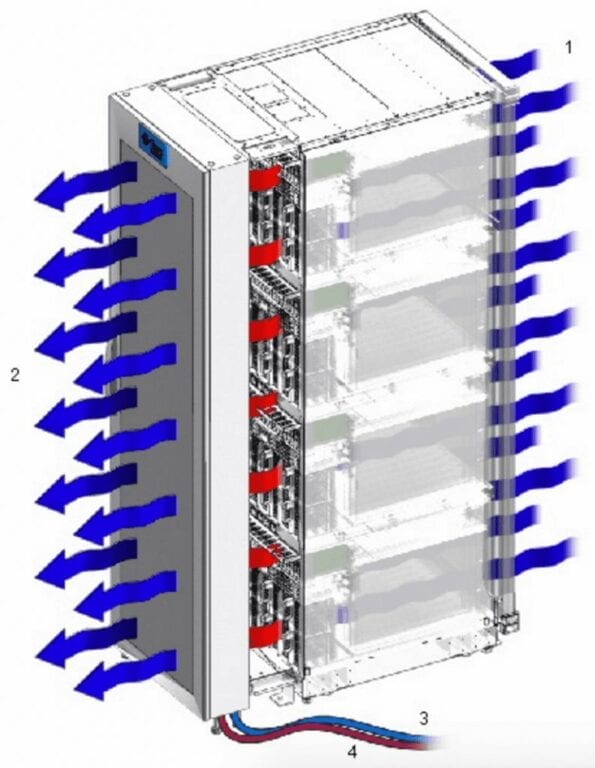

3. In-Rack Heat Extraction

This is where things start to get creative, and there are plenty of similar solutions on the market. The idea behind this Data Center (or, more precisely, rack) cooling method is to extract the heat generated inside the rack so that it never even reaches the server room.

Another common variant places the compressors and chillers inside the rack itself, carrying the heat directly to the outside of the Data Center. The upside is a nice, fresh server room. The downside is that you still can’t achieve very high computational density per rack, and you end up with a very complex, hard-to-maintain setup without much improvement in your PUE.

Liquid-based Cooling

4. Water Cooled Racks and Servers

Water is widely used to cool all kinds of machinery and industrial systems, so why not use it to cool servers directly in a Data Center? Why isn’t it a widespread solution?

The answer is pretty simple: Water + Electricity = Disaster.

The risk of leaks across the server room is far too high, and a leak means disaster. Solutions that guide water to server components in a “secure way” (aka water-cooling-ready servers) are easy to find on the market, as are racks that pump water through the “hot side” to lower the temperature of the air before it enters the server room. Both have only been used in very specific projects.

Check out the IBM iDataPlex Direct Water Cooled dx360 M4 or the 20 kW Water Cooled Rack Door created by Over IP Group.

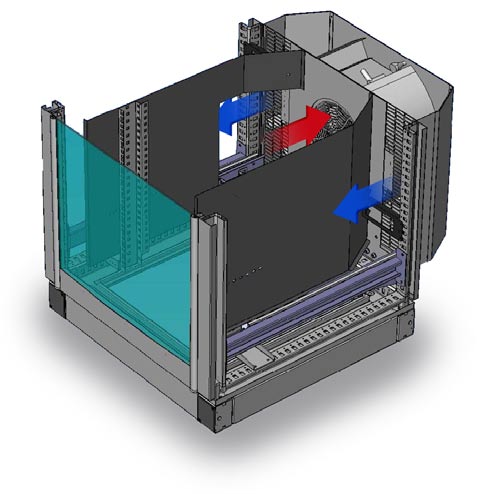

5. Liquid Immersion Cooling

Liquid Immersion Cooling uses a dielectric coolant fluid to absorb the heat from server components. Because a dielectric fluid is an electrical insulator, it can be put in direct contact with electrical components (like CPUs, drives, memory, etc.).

Liquid coolant flowing over a server’s hot components and carrying the heat away to a heat exchanger is hundreds of times more efficient than using massive CRAC (Computer Room Air Conditioning) units to do the same job. Compared with all the other methods, this datacenter cooling method makes unprecedentedly low PUE values possible.
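
One way to see why liquid has such an advantage is to compare how much heat a cubic metre of each fluid absorbs per kelvin of temperature rise (density times specific heat). The sketch below uses typical values for air and assumed, illustrative values for a generic dielectric liquid; they are not the properties of any specific product, so check the datasheet of the actual coolant.

```python
# Assumed, illustrative property values; not data for any specific coolant.
FLUIDS = {
    "air": {"density_kg_m3": 1.2, "cp_j_per_kg_k": 1005.0},
    "dielectric_liquid": {"density_kg_m3": 850.0, "cp_j_per_kg_k": 1900.0},
}


def volumetric_heat_capacity(fluid: dict) -> float:
    """Heat (J) one cubic metre of the fluid absorbs per kelvin of temperature rise."""
    return fluid["density_kg_m3"] * fluid["cp_j_per_kg_k"]


def flow_for_load_l_s(fluid: dict, heat_load_w: float, delta_t_k: float) -> float:
    """Flow in litres per second needed to remove heat_load_w at a rise of delta_t_k."""
    return heat_load_w / (volumetric_heat_capacity(fluid) * delta_t_k) * 1000.0


ratio = volumetric_heat_capacity(FLUIDS["dielectric_liquid"]) / volumetric_heat_capacity(FLUIDS["air"])
print(f"The liquid absorbs ~{ratio:.0f}x more heat per unit volume than air")
for name, props in FLUIDS.items():
    print(f"{name}: {flow_for_load_l_s(props, 10_000, 10.0):.2f} L/s for a 10 kW load at dT = 10 K")
```

Volumetric heat capacity is only part of the picture (pumping power, heat exchanger design and the refrigeration cycle behind a CRAC also matter), but it shows the scale of the difference in how much fluid has to be moved.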

There are two types of Liquid Immersion Cooling:

- Indirect Liquid Cooling: the coolant never actually comes into contact with the components (much the same as in the custom water-cooled servers seen above). Keeping the liquid away from the components makes this approach much less efficient.

- Direct Liquid Cooling: where the coolant liquid is put in direct contact with the servers’ components. Under this category there are two sub-techniques:

  - Single-phase open bath immersion liquid cooling: the coolant liquid never changes state.

  - Two-phase closed or semi-open immersion liquid cooling: the coolant liquid changes state from liquid to gas and back in a constant loop.
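
The difference between the two sub-techniques comes down to how the fluid absorbs heat: a single-phase coolant simply warms up (sensible heat, Q = m · cp · ΔT), while a two-phase coolant boils at a roughly constant temperature (latent heat, Q = m · h_fg) and is then condensed back into liquid. A minimal sketch of both formulas follows; the property values are placeholders for illustration, not data for any real coolant.

```python
def sensible_heat_j(mass_kg: float, cp_j_per_kg_k: float, delta_t_k: float) -> float:
    """Heat absorbed by warming a liquid that stays liquid (single-phase): Q = m * cp * dT."""
    return mass_kg * cp_j_per_kg_k * delta_t_k


def latent_heat_j(mass_kg: float, h_fg_j_per_kg: float) -> float:
    """Heat absorbed by boiling a liquid at constant temperature (two-phase): Q = m * h_fg."""
    return mass_kg * h_fg_j_per_kg


# Placeholder property values for illustration only.
CP = 1100.0        # J/(kg*K), assumed
H_FG = 100_000.0   # J/kg, assumed

single = sensible_heat_j(1.0, CP, 10.0)  # 1 kg of liquid warmed by 10 K
two = latent_heat_j(1.0, H_FG)           # 1 kg of liquid boiled off
print(f"single-phase: {single / 1000:.0f} kJ, two-phase: {two / 1000:.0f} kJ per kg of fluid")
# prints "single-phase: 11 kJ, two-phase: 100 kJ per kg of fluid"
```

With these placeholder values, boiling absorbs far more heat per kilogram than a 10 K temperature rise, but the vapour has to be contained and condensed, which is why two-phase systems use closed or semi-open tanks.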

At Submer, we’ve developed a single-phase, biodegradable liquid coolant that’s environmentally friendly and non-hazardous to datacenter personnel. We favour the open-bath single-phase approach because it’s much more practical to work with in day-to-day operations.

Thanks to the design of the Submer SmartPodX and the tools and services built around it, running your servers in dielectric coolant takes about the same effort, from a datacenter operations point of view, as running them in an air-conditioned server room, while reducing your Data Center construction and running costs (CAPEX and OPEX) by ~45%.

Contact us and discover how to cut a lot of costs.

A special thanks to Diarmuid Daltún